Evidence-Based Practice in Exercise & Nutrition: Common Misconceptions and Criticisms

March 03 2017

Introduction: Evidence-based practice (EBP), or evidence-based medicine (EBM), is an area that I have always been passionate about. In fact, speaking to the late David Sackett (the father of EBM) about EBM on a few occasions is something that I will always cherish. Without an EBP approach, scientific research in health and fitness is pretty much useless. Even if you are not a doctor or a fitness professional, if you are remotely interested in your health, fitness, or nutrition, you should have a basic understanding of EBP. In the article below, I am collaborating with my friend and colleague Brad Schoenfeld, Ph.D., to answer some of the common concerns and questions about EBP. Brad has a strong practical and research background, a combination that is not commonly seen in the fitness field.

We (Anoop Balachandran, Ph.D. & Brad Schoenfeld, Ph.D.) are glad that more and more people are demanding and applying evidence in the exercise and nutrition field. That being said, there remain a lot of misunderstandings and misconceptions about evidence-based practice (EBP). In this article, we will address some of the common misconceptions and criticisms of EBP. Here we go:

1. Why do we need EBP? Why can’t we just use anecdotal evidence or expert opinion?

In fact, we’ve used anecdote or expert opinion as ‘evidence’ to treat people throughout the history of medicine. But this approach clearly didn’t work well, as shown by the hundreds of medical mistakes we made in the past. For example, smoking was considered ‘good’ for health until studies showed otherwise; bloodletting was the standard medical treatment for almost 2000 years by the foremost doctors of the West, and so forth. In short, EBP evolved because anecdotal evidence and expert opinion were not producing ‘results’.

You can read more about it here: Why We Need an Evidence-Based Approach in the Fitness Field

2. So what is EBP/EBM?

The definition of EBM (Evidence Based Medicine) by David Sackett reads: “EBM is a systematic approach to clinical problem-solving that allows integration of the best available research evidence with clinical expertise and patient values”. This principle can be applied across many scientific disciplines, including exercise and nutrition, to optimize results.

2. What is evidence?

Many people wrongly assume that the term “best available evidence” in EBM/EBP is limited to research-based evidence. In fact, evidence can be obtained from a well-conducted randomized controlled trial, an unsystematic clinical observation, or even expert opinion. For example, the evidence could come from a controlled trial, your favorite fitness guru, or a physiological mechanism. However, the critical point is that the importance or trust we place in the evidence differs based on the type of evidence. We will talk more about this when we discuss the evidence hierarchy.

3. What about values and preferences?

Every patient or client brings his/her own values, preferences, and expectations to outcomes and decisions.

For example, some might place a high value on muscle growth, whereas others would value their general health as most important. Some would value building their upper body muscles more than their lower body muscles. Others may value the social aspect of working out at a gym more than the muscle and strength gains.

And rightly so: there is no right or wrong in these personal decisions, and they should be listened to and respected. The job of a fitness professional is to help clients achieve whatever goals they desire; we cannot impose our own values, no matter how much our beliefs and opinions may differ from theirs.

4. What about clinical expertise? And what is the ‘art’ of EBP that people always talk about?

Clinical expertise is what many refer to as the art of EBP. So, does the art of EBP mean applying what has worked for your clients? Clearly not.

Clinical expertise involves basic scientific knowledge, practical expertise, and intuition to:

- diagnose the problem (for example, why this person can’t squat deep, how to correct exercise technique, or why he/she is not gaining strength or losing weight),

- search for the relevant research evidence (how many sets an advanced trainee needs to gain muscle, or which exercise targets specific muscles) and critically analyze the research evidence for methodological issues (was the study in beginners, was the outcome measured relevant),

- understand the benefits, the risks involved, and alternative approaches to the goal (a Crossfit type workout might be motivating and improve general cardiovascular endurance, but has a high risk of injuries),

- alter the program based on client feedback and results (reducing the number of sets or modifying the exercise (angles, ROM, and so forth) for an older person or someone with pre-existing shoulder injuries),

- listen to and understand clients’ values and preferences, clearly communicate the risks, costs, and benefits in a simple manner, and use a shared decision-making approach to come to a decision.

And this is called the art of the evidence-based approach. As you can see, it forms an integral part of EBP and no amount of research can replace it. Likewise, no amount of clinical expertise can replace research evidence.

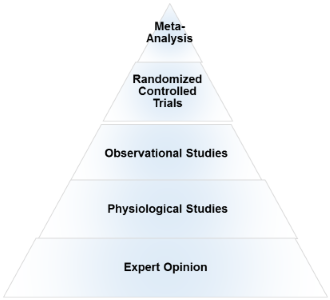

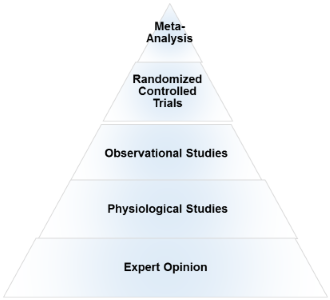

5. What is the evidence hierarchy? And why are RCTs (randomized controlled trials) at the top of the pyramid?

An evidence hierarchy is one of the foundational concepts of EBP. And there are three important points to keep in mind:

- First, as shown, the different types of evidence are arranged in an orderly fashion. As we go up the hierarchy, the trust or confidence we place in the study results goes up too. RCTs are the most valid research design, as they allow us to infer causality. Expert opinion is the least trustworthy and occupies the bottom position. Meta-analyses, which pool a group of RCTs, are generally considered the highest form of evidence, as they synthesize the entire body of literature on a given topic and quantify the results based on a statistical measure of practical meaningfulness. Meta-analyses can be particularly important in exercise- and nutrition-related topics, as the sample sizes are often small and thus pooling the data across studies provides greater statistical power for inference.

- Second, it is important to note that depending on the quality of the study, an RCT can be downgraded, too. A poorly designed study will never provide a high level of evidence, and in fact can impair the ability to draw proper evidence-based conclusions. The hierarchy therefore is not set in stone.

- Third, there is always evidence. So the best available evidence is what is available and need not come from an RCT (Randomized Controlled Trial). But based on the type of evidence, our confidence in the results and our recommendations will differ accordingly.
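To make the point about pooling small studies concrete, here is a minimal sketch of one common pooling method, inverse-variance (fixed-effect) weighting. The effect sizes and standard errors are made-up numbers for illustration only, and the function name `pool_fixed_effect` is our own. Real meta-analyses often use random-effects models and must also judge study quality, which this sketch ignores.

```python
import math

def pool_fixed_effect(effects, std_errors):
    """Inverse-variance (fixed-effect) pooling of per-study effect estimates."""
    # More precise studies (smaller standard error) get larger weights.
    weights = [1.0 / se ** 2 for se in std_errors]
    total = sum(weights)
    pooled = sum(w * e for w, e in zip(weights, effects)) / total
    pooled_se = math.sqrt(1.0 / total)
    return pooled, pooled_se

# Hypothetical effect sizes and standard errors from three small trials.
effects = [0.30, 0.50, 0.40]
std_errors = [0.25, 0.30, 0.20]

pooled, pooled_se = pool_fixed_effect(effects, std_errors)
low, high = pooled - 1.96 * pooled_se, pooled + 1.96 * pooled_se
print(f"pooled effect = {pooled:.2f}, 95% CI = ({low:.2f}, {high:.2f})")
```

The pooled standard error comes out smaller than that of any single study, which is the sense in which pooling provides greater statistical power.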

6. What if there are no RCTs? How do I evaluate a program or diet?

First, as mentioned before, there is always evidence. If there are no RCTs, you simply move down the evidence hierarchy. But as you go lower in the hierarchy, uncertainty about the validity of the evidence goes up as well. Second, you also must compare the benefits, risks, cost, scientific plausibility, and alternative programs before making recommendations. Below are a few examples where the absence of an RCT does not preclude recommendations.

- Example 1: If a client comes with a new program that uses 5 lb weights to increase strength, we know from basic science that without load progression, muscle and strength gains will be nil. Such a program would go against the most fundamental theory of muscle growth. So you can make a strong recommendation against the program, even without an RCT.

- Example 2: Recently, the Ebola virus vaccine was used before conducting an RCT. How is that possible? Here is a classic example of weighing the benefits, risks, alternative approaches, and making a strong recommendation with weak evidence. In this case the risk is death, the benefit is obvious, and there are no alternative approaches. Thus, the risk/reward strongly favored giving the vaccine. And 99% of the informed patients would agree with the recommendation.

- Example 3: If a client wants to try the Xfit program, you can convey the lack of studies (weak evidence), the risks involved, and the time required for learning the right technique, and suggest other programs that are in line with her/his goals. If he/she still wants to do it, he/she shouldn’t be criticized for the decision.

- Example 4: An observational study shows that eating meat raises cancer risk. Because observational studies sit lower in the hierarchy no matter how well the study is conducted, recommendations cannot be more than just suggestions.

What if there are no studies and my client wants to try a new program?

As previously noted, if a person understands the uncertainty due to the lack of studies or weak evidence, the availability of alternative programs that fit his/her goal, the cost, and the risks, he/she can make an informed personal choice. Keep in mind that the majority of questions in exercise and nutrition are backed only by weak evidence. In fact, the same is true of the medical field. But what is important is to clearly know and convey what your recommendations are based on.

7. There are a lot of factors like genetics, diet, motivation that can influence your results. A study hence…

Many people are unaware that in a randomized controlled trial, the randomization serves a crucial purpose: it makes it highly likely that both the known and the unknown variables that can affect muscle growth or strength are distributed evenly across the groups. That is, if there are unknown genetic factors that drive muscle growth, it is highly likely that the genetically gifted individuals will be spread evenly between the groups. This is the reason why RCTs are considered the gold standard for studying cause and effect. Hence, the results of the study can be pinned to the intervention or treatment.
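A small simulation can illustrate how randomization balances a factor nobody measured. This is only a sketch under stated assumptions: the "trait" below is a hypothetical stand-in for something like genetic responsiveness, drawn from a normal distribution, and the sample size of 200 is chosen arbitrarily.

```python
import random
import statistics

random.seed(42)  # fixed seed so the sketch is reproducible

# Hypothetical unmeasured trait (e.g. a genetic responsiveness score) for 200 people.
trait = [random.gauss(0.0, 1.0) for _ in range(200)]

def randomize(values):
    """Shuffle participants and split them into two equal-sized groups."""
    shuffled = values[:]
    random.shuffle(shuffled)
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]

# Repeat the random allocation many times and record the between-group gap.
gaps = []
for _ in range(2000):
    treatment, control = randomize(trait)
    gaps.append(statistics.mean(treatment) - statistics.mean(control))

mean_gap = statistics.mean(gaps)
typical_gap = statistics.pstdev(gaps)
print(f"average gap across allocations: {mean_gap:.3f}")
print(f"typical size of the gap in one allocation: {typical_gap:.3f}")
```

Across many allocations the gap averages out to roughly zero, so neither group is systematically favored. Any single allocation can still be somewhat imbalanced by chance, and that chance imbalance shrinks as the sample size grows.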

8. There are numerous problems with scientific studies. So you cannot use the results of a study to train your clients?

Yes, studies have limitations. But one of the basic steps in EBP is to critically analyze the study: if the study has methodological issues or a different population than your client, you downgrade the evidence accordingly and lower the strength of your recommendations.

9. Most of the studies in bodybuilding/strength training are on untrained individuals.

Yes. And rightly so, caution should be used when extrapolating recommendations to trained individuals. Exercise science is a relatively new field and studies in trained individuals are small in number, but accumulating. Generalizability (i.e. the ability to apply findings from a study to a given population) must always be taken into account when using research to guide decision-making.

10. I don’t care about “why” it works or the science behind it. All I care about are results.

As previously mentioned, EBP evolved to get better results. It didn’t evolve to explain how or why a treatment works. There are thousands of life-saving treatments and drugs whose underlying mechanisms are simply unknown.

11. Studies are looking at an average of the sample. There are a lot of individual differences.

Yes. In fact, n=1 studies occupy the top of the evidence hierarchy because they apply to the specific individual in question. But these are hard, and almost impossible, for certain outcomes like muscle growth or disease prevention. There are two concerns with the so-called trial-and-error method that is often talked about.

- First, even if you gain benefits with a certain program, in many cases, it is extremely hard to figure out what was the variable that made the difference. Was it the specific exercise, the change in diet, the placebo effects, genetics, or some unknown variable?

- Second, depending on the outcome, it may not be clear whether you are indeed making an improvement. For example, gains in muscle come very slowly for trained individuals (years for a few pounds). Hence, you will have to run a program for a few years to see if it works or not. However, controlled research often uses measures that are highly sensitive to subtle changes in muscle mass, and thus can detect improvements in a matter of weeks.

12. The program worked for me!

What was the outcome measure? Strength, muscle growth, weight loss? What are you comparing against? Against your previous results? What was the magnitude of the benefit? Without knowing answers to these questions, the meaning of the word ‘worked’ is unclear.

Further, if it indeed worked, we still don’t know what made it work, or if it will work for someone else. So your personal anecdotes are often fraught with problems and unfortunately mean very little. And importantly, just because something “worked” doesn’t mean that another approach might not work better.

13. This X supplement was shown to increase muscle growth in an animal study. Should I use it?

Research in animal models is almost at the bottom of the evidence hierarchy. Such evidence is very weak, so the uncertainty is high and it deserves no more than a weak recommendation. Although animal models can serve an important purpose in preliminary research, evidence-based practice should rely primarily on human studies when developing applied guidelines.

I saw a supplement study which showed a statistically significant weight loss. Can I use that supplement for my client?

No, you also have to look at how much weight the subjects lost. Statistical “significance” reflects how unlikely the observed result would be if chance alone were at work; it is not necessarily related to the magnitude of the effect. Given a large enough sample size, the results of a study can be statistically significant even with just a 1 lb weight loss over a 1 year period. Whether an effect is large enough to matter in practice is a separate question, known as ‘clinical significance’.

Would you take a supplement to lose 1 lb in a year? Depending on the cost, the burden of taking a pill every day, and how much you value weight loss, you may or may not.
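The gap between statistical and clinical significance can be sketched with a quick calculation. The numbers here are hypothetical: a 1 lb average difference between the supplement and placebo groups, and a 10 lb standard deviation in weight change. The z-test with a known standard deviation is a simplification, and `two_sided_p` is our own helper, not a function from any statistics package.

```python
import math
from statistics import NormalDist

def two_sided_p(mean_diff, sd, n_per_group):
    """Two-sided p-value from a z-test comparing two equal-sized groups."""
    se = sd * math.sqrt(2.0 / n_per_group)  # standard error of the difference
    z = mean_diff / se
    return 2.0 * (1.0 - NormalDist().cdf(abs(z)))

# Hypothetical numbers: 1 lb average difference, 10 lb standard deviation.
mean_diff_lb = 1.0
sd_lb = 10.0

for n in (50, 500, 5000):
    p = two_sided_p(mean_diff_lb, sd_lb, n)
    print(f"n per group = {n:>4}: p = {p:.4f}")
```

The effect is the same 1 lb in every row. Only the sample size changes, yet the p-value drops from clearly non-significant at 50 per group to far below 0.05 at 5000 per group. The p-value says nothing about whether 1 lb is worth the cost and effort.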

14. EBP does not consider a science-based approach.

EBP does consider a science-based approach. A science-based approach provides strong evidence when the program or treatment violates fundamental principles or universal laws. Homeopathy is one example. Likewise, if a new diet tells you to eat as much as you want to lose weight, it goes against the fundamental laws of thermodynamics. You do not need an RCT to make strong recommendations against this diet.

However, EBP does not support recommendations based solely on biological plausibility or mechanistic evidence.

15. “This house believes that in the absence of research evidence, an intervention should not be used.” This was the motion of a debate which took place at the end of the recent PhysioUK2015 Conference in Liverpool.

As you know by now, EBP does not rely solely on RCTs. To quote the famous saying in EBP: “There is always evidence”. It is an unfortunate misrepresentation of EBP/EBM to assume that without RCTs, a treatment cannot be recommended. For example, smoking has perhaps the greatest detrimental effect on health of any social habit, and health-based organizations universally recommend against it. But we do not have even a single RCT on smoking!

The evidence on the effects of smoking comes from observational studies. But since the magnitude of harm is very high, this evidence is upgraded in the evidence pyramid. Once again, this shows why the hierarchy is not set in stone.

16. ‘Parachute use to prevent death and major trauma related to gravitational challenge’. This is the title of a paper published in BMJ. The paper satirically argues that parachute use has not been subjected to rigorous evaluation using RCTs and therefore has not been shown to save lives. Critics of EBP have used this as a criticism of EBP and its reliance on RCTs.

EBP has always maintained that RCTs are not required when the magnitude of benefits is very high.

For example, insulin injection for diabetes, the Heimlich maneuver, and anesthesia are all treatments where the magnitude of benefit is very high, and hence RCTs are neither required nor asked for.

17. I do not have enough knowledge to critically analyze studies.

There are a few resources in the field of exercise and nutrition that critically appraise the evidence for you. They are www.alanaragon.com, www.strengthandconditioningresearch.com, and www.weightology.net.

In closing, we hope the article has helped you better appreciate and understand this simple framework called evidence-based practice or evidence-based medicine. EBP is currently the best approach we have to make decisions related to health, fitness, or strength and conditioning.

A good EBP practitioner should have a strong understanding of both the practical and the scientific aspects of exercise and nutrition and, more importantly, an untiring commitment to and empathy for their clients and their clients’ values and preferences.


| Wed April 12, 2017

Meta-analyses mean NOTHING WITHOUT DEEP EXPLANATION. Medicine is notorious for lots and lots of “studies.” The sheer amount means NOTHING if they do not EXPLAIN.

Science progress demands explanation. This is how we came up with the axial tilt theory of seasons vs. the ancient Greeks’ myth of seasons. The latter WAS testable but it was easy to vary and did not offer a deep explanation which was hard to vary.

PS. Energy is NOT stuff, nor a thing. It is merely a number, a useful number. Matter and energy are NOT related. People eat FOOD, which is MATTER, CARBON ATOMS.

Anoop | Fri April 14, 2017

Thank you Jane for the comment.

Couple of points:

1. In fact, EBP evolved because we had a lot of great explanations of how a treatment works or benefits. But later studies showed we were completely wrong. Examples include hormone replacement therapy, drugs for arrhythmia, sudden infant death syndrome, and so forth.

2. Also, ‘explanations’ come from the same studies that you critiqued. There is no “bible” that we have hidden somewhere that explains everything and can be referred to when we need help.

Does mechanistic evidence/plausibility make the case stronger? Of course.

Mind you, none of the “well-designed and well-done” homeopathic studies has ever shown a benefit over placebo. The key word here is study quality.

| Wed May 24, 2017

I really enjoyed the post. There is too much based on nothing these days, thank you for spreading the word! Have a good one
